In a Nutshell

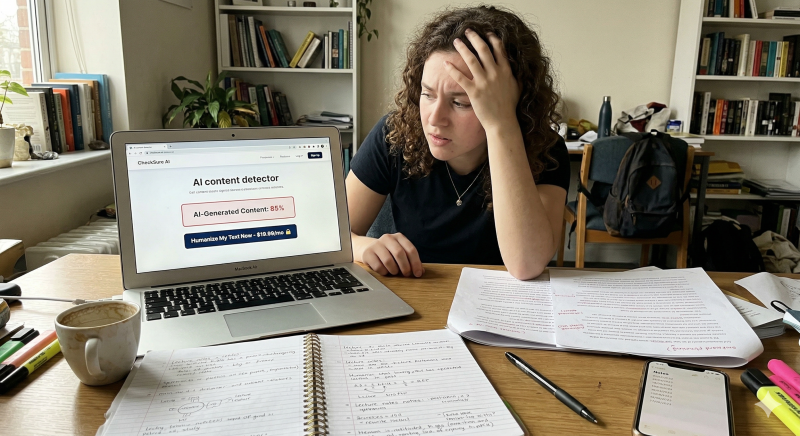

Investigative reporting by AFP found that a new wave of dubious "AI detector" tools is deliberately flagging human-written content as AI-generated in order to sell "humanization" fixes. These tools, including JustDone AI, TextGuard, and Refinely, flagged passages from a 1916 Hungarian literary classic and even total gibberish as highly likely to be AI-generated. If you have recently received a high AI-content score on your own original work, you may be the target of a manufactured urgency trap.

This "pay-to-humanize" scam exploits your fear of academic or professional consequences. You are led to believe your work is "tainted" by AI, and only a paid service can save your reputation.

Fraudulent detectors often use a pre-set script that returns a high AI score regardless of what you actually type. You can expose this by pasting a string of random characters or a well-known historical text into the tool. If the software claims your gibberish is "88% AI content," the tool is a programmed lie designed to trigger panic.
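If you would rather not mash the keyboard yourself, the gibberish test above can be sketched in a few lines of Python. This is only an illustration: the function names `make_gibberish` and `looks_rigged` are made up for this example, the 50% threshold is an arbitrary assumption, and the scores are numbers you read off the detector's page by hand, since the sketch does not connect to any tool.

```python
import random
import string


def make_gibberish(length=400, seed=None):
    """Return random lowercase letters and spaces to paste into a detector.

    No person or language model wrote this text, so a trustworthy tool
    has no reason to report it as confidently AI-generated.
    """
    rng = random.Random(seed)
    # Extra spaces in the alphabet break the output into word-like chunks.
    alphabet = string.ascii_lowercase + "     "
    return "".join(rng.choice(alphabet) for _ in range(length))


def looks_rigged(control_scores, threshold=50.0):
    """Return True if every control text scored at or above the threshold.

    control_scores: AI percentages you copied by hand from the tool for
    inputs that cannot be AI-written (gibberish, a pre-2000 classic).
    threshold: an assumed cut-off for "high", not an industry standard.
    """
    if not control_scores:
        raise ValueError("need at least one control score")
    return all(score >= threshold for score in control_scores)


if __name__ == "__main__":
    # Step 1: paste this output into the detector and note the score.
    print(make_gibberish(seed=1))
    # Step 2: enter the scores you saw for your control texts.
    print(looks_rigged([88.0, 91.0]))
```

A result of `True` does not prove fraud on its own, but a tool that rates both random characters and a century-old novel as mostly AI is not measuring anything about your writing.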

Genuine AI analysis typically relies on substantial computing power and an active connection to a remote server. Try running the tool while your internet is disconnected to see if it still generates a "score." If it works offline, it is likely running a simple local script rather than conducting a genuine analysis of your writing patterns.

The primary goal of these sites is not accuracy, but the monetization of your anxiety through a "humanize" button. Once the tool generates a high AI score, it immediately presents a paywall offering to "scrub" the AI traces for fees reaching $9.99 per use. You should never pay to fix a problem that does not actually exist in your original writing.

In the AFP investigation, JustDone AI flagged a report on the US-Iran conflict as AI-generated simply to redirect the user to a payment screen. These platforms rely on "manufactured urgency"—the psychological pressure to pay immediately to avoid a perceived threat. This tactic turns a simple writing tool into a high-pressure sales funnel.

If you do use a "humanization" service, the results are often what academic researcher Debora Weber-Wulff calls "tortured phrases." These tools use synonyms that are technically correct but contextually nonsensical, creating a "word salad" that sounds like a machine trying to mimic a person. You will find that these services often replace clear, professional language with unrelated jargon or awkward alternatives.

Legitimate writing never needs to be "humanized" by an automated tool. When a scam tool "scrubs" your text, it is simply garbling your words to bypass the same poorly coded detection algorithms that flagged you in the first place. This creates a cycle of dependency where you pay to break your own writing.

Scammers frequently claim to be partnered with prestigious universities to build unearned authority. Some tools claim associations with Cornell University—a claim that the institution has explicitly denied. You should treat any tool that relies on "borrowed prestige" from famous universities as a major red flag.

These fake scores contribute to the "liar’s dividend"—a disinformation tactic where authentic content is dismissed as a fabrication. This erodes trust between students and educators, as legitimate work is wrongly accused of being AI-generated. Always check the "About Us" section for verifiable contact information and a transparent explanation of the tool's methodology.

Skepticism is your best defense against these digital traps. If a tool demands payment to fix a "high AI score" on work you know you wrote yourself, it is a scam. You should report these fraudulent platforms to the FTC at reportfraud.ftc.gov to help protect other writers and students.

A high AI score is often a sales pitch in disguise, not a reflection of your work.

Adam Collins is a cybersecurity researcher at ScamAdviser who operates under a pseudonym for privacy and security. With over four years on the digital frontlines and 1,500+ days spent deconstructing thousands of fraud schemes, he specialises in translating complex threats into actionable advice. His mission: exposing red flags so you can navigate the web with confidence.